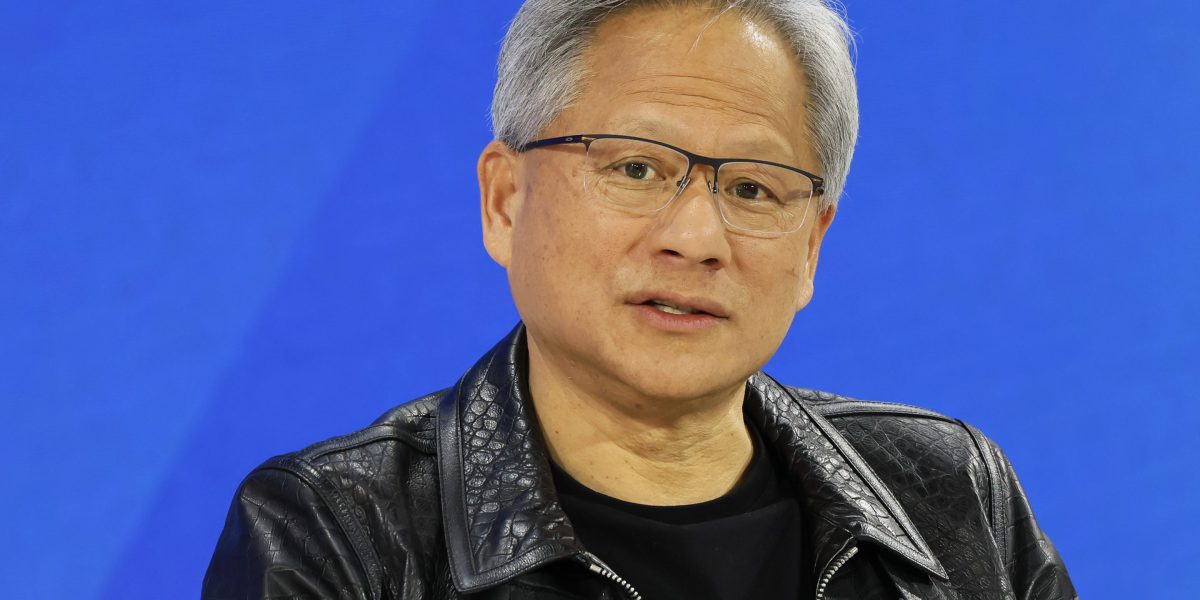

Nvidia boss Jensen Huang is starting to feel like Atlas with the weight of the world—or at least AI—bearing down squarely on his shoulders.

The CEO and founder of the $2.9 trillion semiconductor giant spoke openly on Wednesday about the tension he’s feeling, offering a glimpse into the pressure he faces to crank up the supply of microchips capable of training the latest AI systems like the successor to OpenAI’s GPT-4.

Customers see his products from the perspective of a player in a zero-sum game—each chip they receive means both an advantage for them as well as a disadvantage for rivals who struck out. Huang’s job is to walk this tightrope, balancing the allocation of supply as best he can in order not to endanger client relationships.

“Delivery of our components—and our technology, and our infrastructure and software—is really emotional for people, because it directly affects their revenues, it directly affects their competitiveness,” he told a tech conference hosted by Wall Street investment bank Goldman Sachs.

Exactly what Huang meant by “emotional” is unclear, and Nvidia declined to comment further when reached by Fortune. But one thing is certain: customers, especially the three main cloud hyperscalers Microsoft, Google and Amazon, which each require a vast supply, realistically have nowhere else to turn. Nvidia controls 90% of the market.

Speed is everything in the AI race

As founder and CEO, Huang takes his responsibility to key customers personally. In April, he hand-delivered the world’s first DGX server equipped with Nvidia’s upgraded Hopper series AI training chip, the new H200, to OpenAI chief executive Sam Altman and president Greg Brockman.

First @NVIDIA DGX H200 in the world, hand-delivered to OpenAI and dedicated by Jensen “to advance AI, computing, and humanity”: pic.twitter.com/rEJu7OTNGT

— Greg Brockman (@gdb) April 24, 2024

And in his quest to topple OpenAI from the industry summit, Elon Musk recently revealed plans to purchase 50,000 H200s over the next few months to double the compute at his xAI training cluster in Memphis, where his rival AI model, Grok-3, is currently in training.

“Our fundamental competitiveness depends on being faster than any other AI company. This is the only way to catch up,” Musk explained in a recent social media post. “When our fate depends on being the fastest by far, we must have our own hands on the steering wheel, rather than be a backseat driver.”

That’s why he was willing to risk the ire of Tesla shareholders after allocating Nvidia chips to xAI that should have gone to training the carmaker’s self-driving software system, FSD. It also explains how xAI was able to bring online the largest single compute cluster in the world, powered by 100,000 Nvidia H100 graphics processing units, in the span of just three months.

Whether Musk is a preferred customer is not known, but Nvidia has revealed that just four data center clients were responsible for nearly half of its Q2 top-line revenue.

“If we could fulfil everybody’s needs, then the emotion would go away, but it’s very emotional, it’s really tense,” Huang told the Goldman conference on Wednesday. “We’ve got a lot of responsibility on our shoulders and we try to do the best we can.”

Supply constraints set to ease going forward

Huang is not being facetious. At the end of last month, Nvidia posted fiscal second-quarter results that Wedbush tech analyst Dan Ives called nothing short of “the most important tech earnings in years”.

One of the core concerns that both investors and customers were most keen to hear addressed was the rollout and ramp-up of Blackwell, the next series of AI chips after Hopper, following reports of delays.

During the investor call, Huang confirmed changes had to be made to the production design template, or “mask”, to improve the yield of chips printed on each silicon wafer. Nonetheless, he insisted he would still be able to ship several billion dollars’ worth of Blackwell processors before the fiscal fourth quarter ends in late January.

Unlike integrated device manufacturers like Intel or Texas Instruments, Nvidia outsources all semiconductor fabrication to dedicated foundries that specialize in production technology, primarily TSMC. The Taiwanese giant leads the industry when it comes to efficiently miniaturizing transistors, the building blocks of the logic chips printed on each wafer.

That means, however, that Nvidia cannot respond quickly to demand spikes, especially since these chips go through months of complicated process steps before they are fully fabricated.

Pressed about the issue, Huang told Bloomberg Television late last month that constraints were easing.

“We’re expecting Q3 to have more supply than Q2, we’re expecting Q4 to have more supply than Q3 and we’re expecting Q1 to have more supply than Q4,” he said in the interview. “So I think our supply condition going into next year will be a large improvement over this last year.”

If true, then Huang can finally look forward to fewer tense moments with less emotional customers in the not too distant future.
